AI governance in 2026: frameworks are not enough anymore

AI governance used to sound like something you could solve with a framework, a review board, and a policy document nobody reads. In 2026, that is not enough. As Microsoft put it in April, many governance models were built for a world that no longer exists - one where AI stayed within neat boundaries rather than moving across apps, data sources, and workflows at production speed. At the same time, Diligent reports that only 29% of organizations have comprehensive AI governance plans, even though technology is now the top risk concern for 60% of legal, compliance, and audit leaders.

That is why so many companies feel stuck. They do have policies. They do have approval steps. They may even have principles on the wall. But governance breaks down when it lives in decks, email threads, and manual reviews while product teams are shipping live AI features. NIST’s AI Risk Management Framework is useful here above all because it treats governance as a cross-cutting function that should shape how teams map, measure, and manage risk throughout the AI lifecycle. NIST is also clear that its playbook is voluntary and not a one-size-fits-all checklist.

For companies building custom software, that changes the real question. It is no longer, “Do we have an AI policy?” It is, “Where can this system act, what data can it touch, when does a human step in, and how will we know when it is wrong?” Microsoft’s April 2026 guidance makes the same point in practical terms - governance has to be risk-based. A low-risk internal productivity assistant should not be governed the same way as a system connected to core business operations, sensitive data, or meaningful business actions.

That shift matters because not all AI risk looks the same. The EU AI Act follows the same basic logic with a risk-based structure, and its transparency rules are set to apply from August 2026. In other words, this is no longer just good internal discipline. It is increasingly part of the environment in which companies will be expected to operate.

So what does better governance look like in practice?

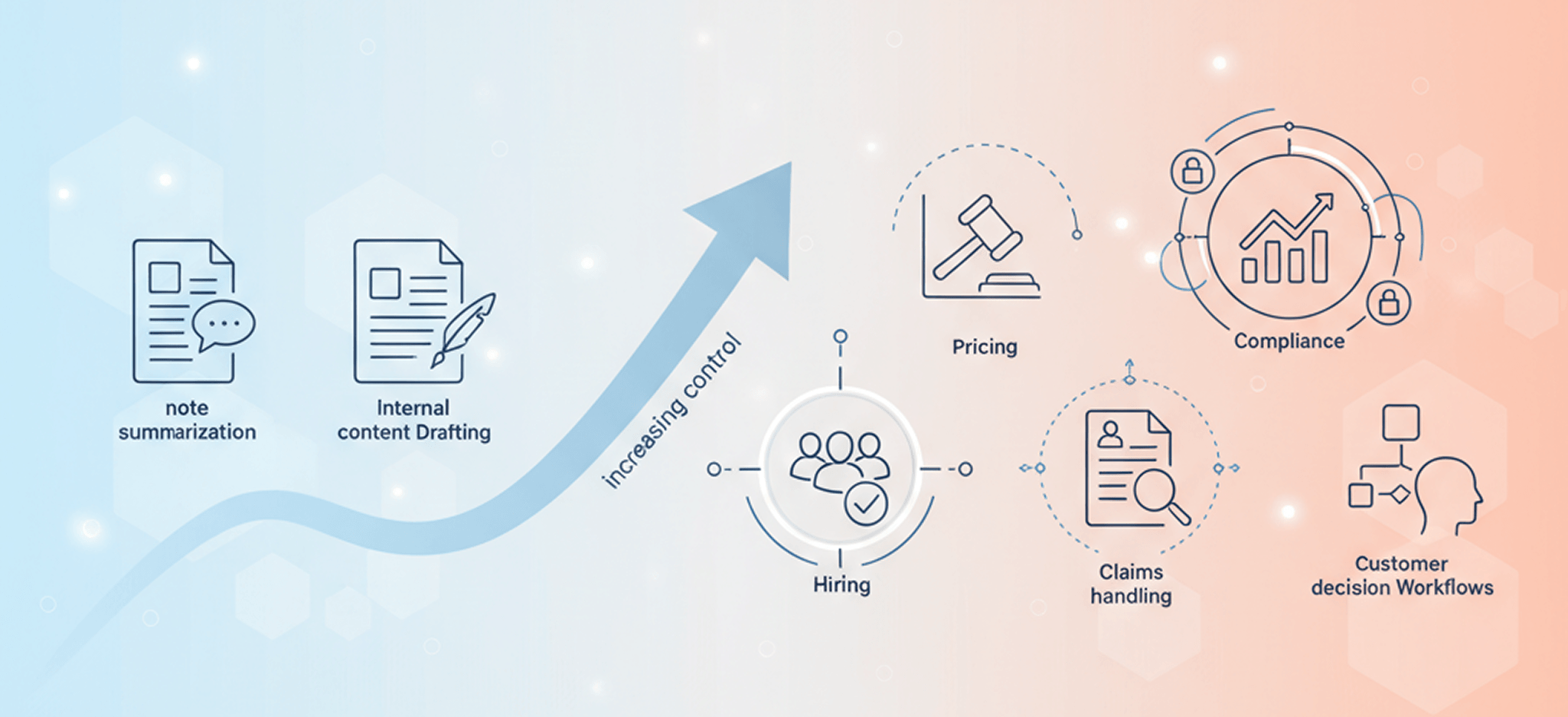

First, it starts with the real use case, not the abstract principle. If an AI feature helps summarize notes or draft internal content, that is one level of risk. If it influences eligibility, pricing, claims, hiring, customer communications, or compliance decisions, that is another. Good governance reflects those differences instead of forcing every use case into the same approval lane. Microsoft’s April framework calls for exactly this kind of graduated risk model, where control increases with sensitivity, reach, and impact.
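To make the idea of a graduated risk model concrete, here is a minimal sketch in Python. It is not Microsoft's framework or anyone's official scheme: the tier names, the `UseCase` fields, and the control lists are assumptions made up for illustration, and only the high-impact decision areas come from the examples in the paragraph above.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Decision areas named in the article as higher risk (identifiers are hypothetical).
HIGH_IMPACT_AREAS = {
    "eligibility", "pricing", "claims", "hiring",
    "customer_communications", "compliance",
}


@dataclass(frozen=True)
class UseCase:
    name: str
    decision_area: str            # e.g. "internal_drafting" or "pricing"
    touches_sensitive_data: bool
    can_take_actions: bool        # True if the AI can change state in other systems


def classify(use_case: UseCase) -> RiskTier:
    """Assign a tier so that control grows with sensitivity, reach, and impact."""
    if use_case.decision_area in HIGH_IMPACT_AREAS:
        return RiskTier.HIGH
    if use_case.touches_sensitive_data or use_case.can_take_actions:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Illustrative controls per tier; real requirements depend on the organization.
REQUIRED_CONTROLS = {
    RiskTier.LOW: ["usage logging"],
    RiskTier.MEDIUM: ["usage logging", "access review"],
    RiskTier.HIGH: ["usage logging", "access review", "human approval", "audit trail"],
}

notes = UseCase("meeting notes summary", "internal_drafting", False, False)
claims = UseCase("claims triage", "claims", True, True)
for uc in (notes, claims):
    tier = classify(uc)
    print(f"{uc.name}: {tier.name} -> {REQUIRED_CONTROLS[tier]}")
```

The point of the sketch is the shape, not the thresholds: each use case gets its own lane, and the list of required controls gets longer as the tier goes up.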

Second, governance has to show up inside the product and platform itself. Microsoft says this plainly - governance works when it is enforced by the platform, not layered on later through policy decks, emails, or spreadsheets. That means access controls, sharing rules, logging, approval gates, connector restrictions, escalation paths, and lifecycle management cannot be side documents. They have to be part of how the system actually behaves.
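As a rough illustration of what "enforced by the platform" can mean in code, the sketch below puts an access check, an approval gate, and audit logging in front of every AI-triggered action. The roles, action names, and the `enforce` function are hypothetical, and a real system would back this with its own identity and approval services.

```python
import json
import logging
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

logging.basicConfig(level=logging.INFO, format="%(message)s")
audit_log = logging.getLogger("ai.audit")

# Hypothetical policy table: which roles may trigger which AI actions,
# and which actions need a recorded human approval first.
ALLOWED_ACTIONS = {
    "support_agent": {"draft_reply", "summarize_ticket"},
    "finance_analyst": {"summarize_ticket", "issue_refund"},
}
NEEDS_APPROVAL = {"issue_refund"}


class PolicyViolation(Exception):
    """Raised when a request is blocked by the governance gate."""


@dataclass
class ActionRequest:
    user_role: str
    action: str
    approved_by: Optional[str] = None


def enforce(request: ActionRequest) -> None:
    """Allow the request or raise; every decision is written to the audit log."""
    allowed = request.action in ALLOWED_ACTIONS.get(request.user_role, set())
    if not allowed:
        outcome = "denied_role"
    elif request.action in NEEDS_APPROVAL and not request.approved_by:
        outcome = "denied_missing_approval"
    else:
        outcome = "allowed"

    audit_log.info(json.dumps({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": request.user_role,
        "action": request.action,
        "approved_by": request.approved_by,
        "outcome": outcome,
    }))
    if outcome != "allowed":
        raise PolicyViolation(outcome)


enforce(ActionRequest("support_agent", "draft_reply"))
enforce(ActionRequest("finance_analyst", "issue_refund", approved_by="team_lead"))
```

Because the check runs in the request path, a denied action never reaches the model or the downstream system, and the log records blocked attempts as well as allowed ones.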

Third, governance should be measured by outcomes, not paperwork. NIST encourages organizations to evaluate whether their approach improves policies, practices, implementation plans, indicators, measurements, and expected outcomes. That is a much stronger standard than “we wrote the document.” It pushes teams to ask better questions: Can users tell when AI is involved? Can risky outputs be reviewed fast? Can decisions be explained? Are incidents logged in a way that helps improve the system instead of hiding the problem?
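The questions above can be turned into numbers a team tracks over time. The sketch below is one possible way to do that, assuming a hypothetical `FlaggedOutput` record per risky output; the field names and the choice of metrics are illustrative and are not taken from NIST.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class FlaggedOutput:
    flagged_at: datetime
    reviewed_at: Optional[datetime]   # None if nobody has reviewed it yet
    ai_disclosed_to_user: bool        # could the user tell AI was involved?
    has_explanation: bool             # can the decision be explained?


def outcome_metrics(records: List[FlaggedOutput]) -> dict:
    """Summarize governance outcomes instead of counting documents."""
    if not records:
        raise ValueError("need at least one record")
    reviewed = [r for r in records if r.reviewed_at is not None]
    review_minutes = [
        (r.reviewed_at - r.flagged_at).total_seconds() / 60 for r in reviewed
    ]
    total = len(records)
    return {
        "disclosure_rate": sum(r.ai_disclosed_to_user for r in records) / total,
        "explanation_rate": sum(r.has_explanation for r in records) / total,
        "review_rate": len(reviewed) / total,
        "median_review_minutes": median(review_minutes) if review_minutes else None,
    }


t0 = datetime(2026, 1, 1, 9, 0)
sample = [
    FlaggedOutput(t0, t0 + timedelta(minutes=10), True, True),
    FlaggedOutput(t0, t0 + timedelta(minutes=30), True, False),
    FlaggedOutput(t0, None, False, True),
    FlaggedOutput(t0, t0 + timedelta(minutes=20), True, True),
]
print(outcome_metrics(sample))
```

A falling review rate or a rising median review time is a signal that governance is slipping in production, whatever the policy document says.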

At MYGOM, this is where a story-first approach becomes useful. Not story as marketing. Story as software design. Before launch, we should be able to map the real journey - who is relying on the output, what decision the AI is shaping, where confidence drops, where a human can step in, what gets recorded, and what the user is told. That kind of narrative thinking turns governance from an abstract compliance exercise into something product teams, compliance leads, and business stakeholders can all work with. It also lines up with NIST’s emphasis on continuous risk management and multidisciplinary perspectives throughout the lifecycle.
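One lightweight way to keep that journey map honest is to store it as a structured record and check it for gaps before launch. The sketch below is only an illustration of the idea: `JourneyMap` and its field names are hypothetical and simply mirror the six questions listed above.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class JourneyMap:
    feature: str
    relies_on_output: str = ""                                    # who depends on the result
    decision_shaped: str = ""                                     # what decision the AI influences
    low_confidence_points: List[str] = field(default_factory=list)
    human_handoff: str = ""                                       # where a person can step in
    recorded_events: List[str] = field(default_factory=list)      # what gets logged
    user_notice: str = ""                                         # what the user is told


def unanswered(journey: JourneyMap) -> List[str]:
    """Return the names of journey questions that are still empty."""
    return [f.name for f in fields(journey) if not getattr(journey, f.name)]


draft = JourneyMap(
    feature="refund assistant",
    relies_on_output="support agents",
    decision_shaped="whether a refund is approved",
)
print(unanswered(draft))
```

A team could run a check like this in a release pipeline so that a feature with open questions does not ship by accident.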

This matters even more now because AI systems are becoming more agentic. Microsoft’s April 2026 work on agent governance points to a world where AI is no longer just answering questions in a chat window, but taking actions across tools and systems. OWASP’s Top 10 for Agentic Applications 2026 reflects the same shift, highlighting risks such as tool misuse, identity abuse, memory poisoning, and cascading failures in autonomous systems. If your governance model was built for a single model behind a simple interface, it will not be strong enough when AI starts acting across environments.
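For agentic systems, two simple guardrails address part of this: an allowlist of tools the agent may call, and a cap on how many calls it may make in one run. The sketch below shows both under stated assumptions. `ToolGuard` and the tool names are hypothetical, and this is not a mitigation prescribed by OWASP or Microsoft, only one plausible starting point.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List, Set


class ToolCallBlocked(Exception):
    """Raised when the agent tries a call the guard does not permit."""


@dataclass
class ToolGuard:
    allowed_tools: Set[str]
    max_calls_per_run: int = 5          # arbitrary example budget
    history: List[str] = field(default_factory=list)

    def call(self, tool_name: str, tool: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        """Run the tool only if it is allowlisted and the run has budget left."""
        if tool_name not in self.allowed_tools:
            raise ToolCallBlocked(f"tool not allowlisted: {tool_name}")
        if len(self.history) >= self.max_calls_per_run:
            raise ToolCallBlocked("call budget exhausted for this run")
        self.history.append(tool_name)
        return tool(*args, **kwargs)


def search_kb(query: str) -> str:
    # Stand-in for a real knowledge-base lookup.
    return f"results for {query!r}"


def delete_account(user_id: str) -> str:
    # Stand-in for a destructive action the agent should not reach.
    return f"deleted {user_id}"


guard = ToolGuard(allowed_tools={"search_kb"}, max_calls_per_run=2)
print(guard.call("search_kb", search_kb, "refund policy"))
try:
    guard.call("delete_account", delete_account, "u-123")
except ToolCallBlocked as exc:
    print("blocked:", exc)
```

The allowlist limits what a misused or manipulated agent can touch, and the call budget puts a ceiling on how far one bad run can spread.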

The companies that will handle this well are not the ones with the thickest policy binder. They are the ones that can connect governance to product design, access control, human oversight, logging, escalation, and clear user communication. That is what trustworthy AI looks like in practice. Not more theory. Not more slogans. Just better systems, better controls, and fewer surprises in production.

If you are building AI into customer support, operations, finance, compliance, or internal workflows, start there. Map the journey. Classify the risk. Decide the human handoff. Log what matters. Make the rules visible inside the product. Frameworks still matter. But in 2026, they only matter if they change what actually happens in production.

And if you need help, reach out to us. We can help.

Gabriele J.

Marketing Specialist